Artificial Intelligence: The Tool or the Teacher?

With the increasing sophistication of technologies, the question arises: Is artificial intelligence biased? Do algorithms discriminate, and is it a flaw of the system or of its creator? Framed this way, the problem is external, something we don’t control and can’t possibly know. Yet bias in the hands of people is potentially the most dangerous kind. What if the issue is not what or how AI learns from us, but that we are unknowingly learning from it?

In a study on how biases pass between artificial and human intelligence, participants were asked to perform a simulated medical diagnosis task. There were two groups: one worked independently, and the other was assisted by an AI system. This AI system was deliberately programmed to be biased, making systematic errors. As expected, the participants followed the AI’s flawed recommendations while they were using it. Unexpectedly, once the AI was taken away, those participants continued to make the same mistakes without prompting. They had internalised the bias.

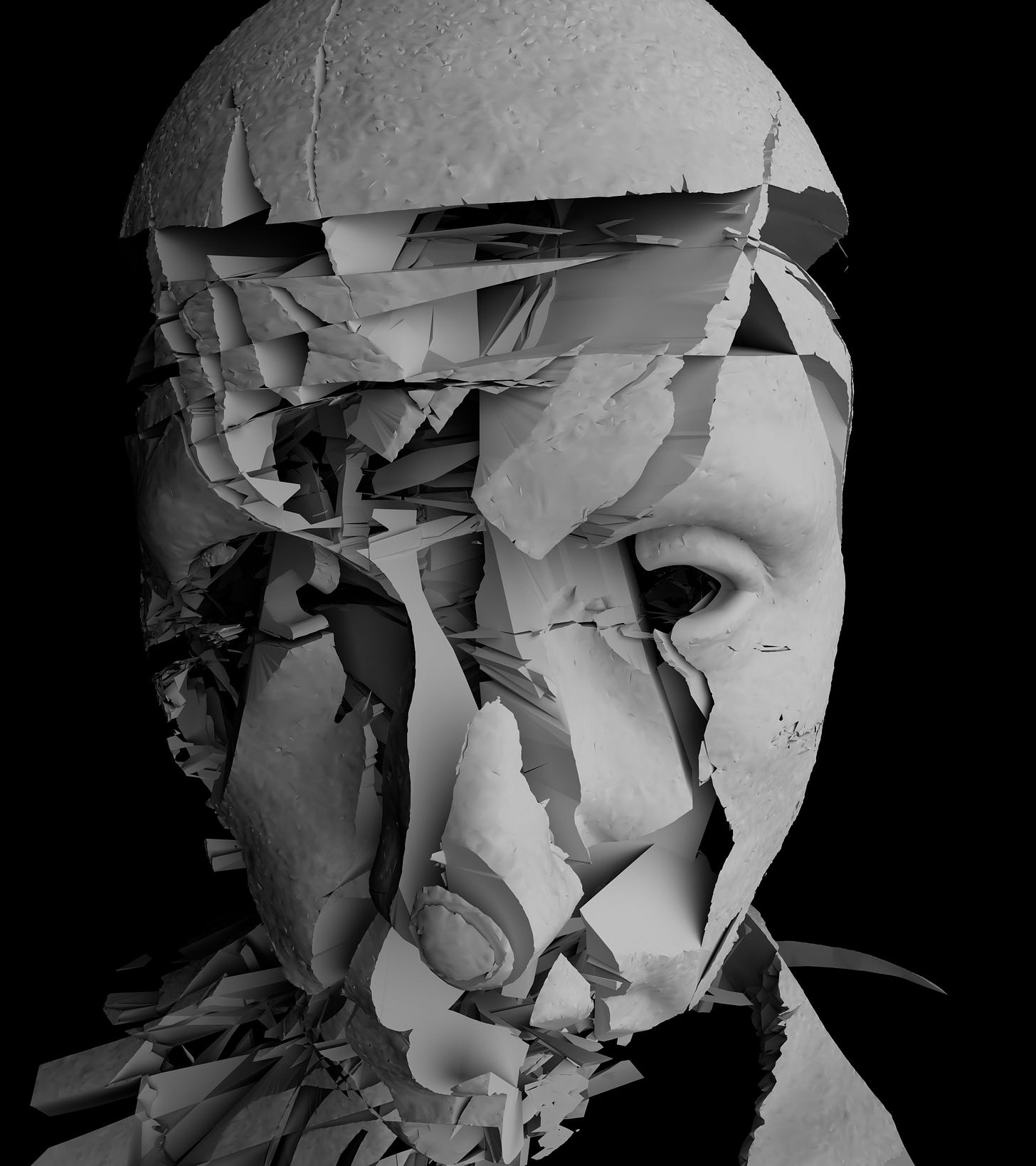

Using AI as a tool has become commonplace, but the problem lies in calling it a ‘tool’. A tool is used for a purpose; it does not train or teach. Outsourcing thought, on the other hand, is where the line blurs between a tool for controlled use and a tool for reliance. We don’t simply use AI; we absorb it. Once that shift happens, the algorithm is no longer needed. The bias has migrated from the system to the self.

We pride ourselves on being independent thinkers and assume that tools merely support us without changing us. This is not about technology, but identity. When we start to trust a tool to make a decision, it doesn’t just influence our own decisions; it rewires how we decide. Persistently.

There’s a loop at play. Humans create biased data, AI learns that bias, and, naturally, outputs it. This research points to a secondary loop that occurs after the initial one: AI outputs bias, humans absorb and reinforce it, and the new data they produce is even more biased than before. It is a closed, self-reinforcing circuit that is almost invisible and deeply human.
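To make the shape of that loop concrete, here is a toy sketch in Python. It is not the study’s model: the function name `simulate_bias_loop`, the 0-to-1 bias scale, and the `absorb` and `reinforce` rates are all illustrative assumptions, chosen only to show how a small starting bias could compound as it passes between data, machine, and people.

```python
def simulate_bias_loop(initial_bias=0.10, absorb=0.5, reinforce=0.2, rounds=5):
    """Toy feedback loop. Every parameter here is a hypothetical assumption.

    initial_bias: bias already present in human-made data (0 = none, 1 = total)
    absorb:       share of the gap to the AI's bias that humans take on per round
    reinforce:    how strongly absorbed human bias leaks into the next data set
    """
    data_bias = initial_bias
    human_bias = 0.0
    history = []
    for _ in range(rounds):
        # Step 1: the AI learns from the data and reproduces its bias.
        ai_bias = data_bias
        # Step 2: humans working with the AI drift toward its bias.
        human_bias += absorb * (ai_bias - human_bias)
        # Step 3: humans produce new data that carries the absorbed bias.
        data_bias = min(1.0, data_bias + reinforce * human_bias)
        history.append(data_bias)
    return history


if __name__ == "__main__":
    for round_number, bias in enumerate(simulate_bias_loop(), start=1):
        print(f"round {round_number}: data bias = {bias:.3f}")
```

Under these assumptions the bias in the data rises every round. Setting `absorb=0.0`, meaning people take nothing on from the machine, keeps it flat, which is the point: in this sketch the human step is where the loop closes.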

This is not just observed in the laboratory setting; it’s occurring in hiring decisions, performance reviews, medical judgments, and the average person’s ‘quick checks’ on ChatGPT. It’s not just that AI might be wrong; it’s that the more habitual this becomes, the less we stop to question what we are told, and we carry errors forward as if they were our own insights. AI gives answers with confidence. These answers are clean, structured, and immediate, and that’s enticing when life is usually so ambiguous, especially in high-stress roles and situations. The more we trust in confidence, the less we trust in ourselves. Eventually, the self and the machine align.

In the middle of this, we lose curiosity, doubt, and friction. We lose the psychological mechanisms that push us through the uncomfortable pause before we make decisions, where deep thinking occurs. Remove that discomfort, and all that is left is imitation painted as efficiency.

While most organisations are trying to optimise their use of AI, they are not considering the damage it’s doing to the way their employees think, how these decisions are affecting workplace culture, or how innovation may be starting to dissolve. A new ethical dilemma is emerging: optimising workload with AI is affecting human behaviour and the way the brain is wired, ultimately to the downfall of both the individual and the institution.

How often do we disagree with an AI’s recommendation? And how often is that disagreement acted upon? Where in our daily lives do we mistake confidence for accuracy? We don’t need fewer tools; we simply need more awareness. Look at how we react to what AI tells us, and whether we are following blindly.

From a depth psychology perspective, this phenomenon is not surprising; it’s revealing. We encounter AI as a new surface to project upon. Where previously we may have projected authority onto our leaders, institutions, or experts, we now project it onto machines. AI becomes a modern oracle: disembodied, driven by data, and seemingly objective. The psyche, unfortunately, does not draw this distinction as clearly as we’d like to think. When we defer to the machine, we both outsource our cognition and engage in an act of identification. The external authority is internalised, much as parental voices or cultural norms become part of the superego. Over time, this can dull our capacity for individuation (the process of becoming psychologically whole). Instead of wrestling with ambiguity, we are offered ready-made interpretations. The risk is not dependence in a functional sense, but rather the breakdown of our inner authority. If we no longer trust our own judgement, what, exactly, is doing the judging?

The full research paper, ‘Humans inherit artificial intelligence biases’, was published in Scientific Reports in 2023.